Everyone’s Building Agents. Most Shouldn’t Be.

Every other LinkedIn post is someone announcing they built an AI agent over the weekend. Every AI newsletter has a tutorial. The FOMO is thick enough to cut with a CLI command.

And look — the market is real. The global AI agents market is projected to hit $47.1 billion by 2030, growing at a 45.8% CAGR. Money is moving. But “the market is growing” and “you specifically need this right now” are two very different statements.

Here’s what I keep seeing: SEO professionals and marketing directors buying solutions to problems they don’t actually have. They’re building an SEO AI agent because everyone else seems to be — not because they’ve identified a specific, recurring workflow that justifies the investment. That gap between “this is interesting” and “this will make my team measurably more effective” is where most of the wasted time lives.

So let’s figure out where you actually fall.

What You’re Actually Looking At: Prompting vs. Multi-Step LLM Execution vs. Real Agents

Here’s the fastest way I can explain this without giving you a textbook definition:

When you ask Claude to audit your title tags and it gives you a list — that’s prompting. When you set up a Claude Code session that crawls your sitemap, pulls your titles, checks character counts, and flags issues in sequence — that’s multi-step LLM execution. When a system does that every Monday at 6am, logs changes, remembers last week’s results, decides what to escalate, and pings you on Slack only when something actually broke — that’s an agent.

Most of what’s being sold as “AI agents” right now is step two dressed up as step three.
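To make step two concrete, here’s a minimal Python sketch of the title-tag sequence written as plain code. It makes a few assumptions. The sitemap is a standard XML `<urlset>` file. `fetch` is a hypothetical function you supply, so swap in whatever HTTP client you use. The 30–60 character title range is a common rule of thumb, not a Google rule. In a Claude Code session the model would drive these steps. What matters here is what’s missing: no memory between runs and no decisions, just a fixed sequence that runs once.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET
from html.parser import HTMLParser
from typing import Callable

# Namespace used by standard XML sitemaps (sitemaps.org protocol).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def parse_sitemap(xml_text: str) -> list[str]:
    """Step 1: pull every <loc> URL out of a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


class _TitleParser(HTMLParser):
    """Collects the text inside the page's <title> element."""

    def __init__(self) -> None:
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def extract_title(html: str) -> str:
    """Step 2: pull the <title> text from one page's HTML."""
    parser = _TitleParser()
    parser.feed(html)
    return parser.title.strip()


def flag_title(title: str, min_len: int = 30, max_len: int = 60) -> str | None:
    """Step 3: check character count; return an issue label or None."""
    if not title:
        return "missing"
    if len(title) < min_len:
        return "too short"
    if len(title) > max_len:
        return "too long"
    return None


def audit(sitemap_xml: str, fetch: Callable[[str], str]) -> dict[str, str]:
    """Step 4: run the fixed sequence once and collect flagged URLs."""
    issues = {}
    for url in parse_sitemap(sitemap_xml):
        problem = flag_title(extract_title(fetch(url)))
        if problem:
            issues[url] = problem
    return issues
```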

| | Prompting | Multi-Step LLM Execution | Real Agent |
|---|---|---|---|
| What it looks like | You ask Claude a question, it answers | You set up a session that runs multiple tasks in sequence | A system runs autonomously on a schedule, makes decisions, logs results |
| Memory | None between sessions | Session-only context | Persistent memory across runs |
| Decision-making | You decide what to ask next | You define the sequence, LLM executes | Agent decides what to do next based on prior results |
| Human involvement | Every step | Setup + review | Setup + monitoring |
| Example | “Audit these 10 title tags” | Claude Code crawls sitemap → pulls titles → flags issues | System runs Monday 6am → compares to last week → escalates only new issues to Slack |
| Who needs this | Everyone using AI for SEO | Power users with repetitive multi-step workflows | Teams running high-volume, recurring tasks across multiple sites |
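The Real Agent column boils down to two things a script doesn’t have: memory that survives between runs, and a decision about what’s worth your attention. Here’s a minimal Python sketch of just that piece. It assumes last run’s issues live in a local JSON file, and `notify` is a hypothetical stand-in for a Slack webhook call. It escalates only new or changed issues and stays quiet otherwise.

```python
from __future__ import annotations

import json
from pathlib import Path
from typing import Callable


def load_state(path: Path) -> dict[str, str]:
    """Persistent memory: the issues found on the previous run (empty on first run)."""
    if path.exists():
        return json.loads(path.read_text())
    return {}


def save_state(path: Path, issues: dict[str, str]) -> None:
    """Overwrite memory with this run's issues so next week compares against them."""
    path.write_text(json.dumps(issues, indent=2, sort_keys=True))


def decide(current: dict[str, str], previous: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Decision step: which issues are new (or changed) and which were resolved."""
    new = {url: issue for url, issue in current.items() if previous.get(url) != issue}
    resolved = sorted(set(previous) - set(current))
    return new, resolved


def run_once(current: dict[str, str], state_path: Path,
             notify: Callable[[str], None]) -> tuple[dict[str, str], list[str]]:
    """One scheduled run: compare to last run, escalate only what's new, update memory."""
    previous = load_state(state_path)
    new, resolved = decide(current, previous)
    if new:  # stay quiet when nothing new broke
        lines = [f"{url}: {issue}" for url, issue in sorted(new.items())]
        notify("New SEO issues:\n" + "\n".join(lines))
    save_state(state_path, current)
    return new, resolved
```

For the “every Monday at 6am” part, a cron entry such as `0 6 * * 1` that runs a script calling `run_once` is enough. A JSON file is the simplest possible memory; SQLite or a database table does the same job once you’re tracking many sites.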

The Social Media Hype Pattern

Let’s name what’s happening without pretending it’s not: “I built an AI agent in 10 minutes” threads get 10x the engagement of “here’s how I improved my prompting workflow.” The incentive structure rewards calling everything an agent. OpenAI, Anthropic (which recently renamed the Claude Code SDK to the Claude Agent SDK), and every tool vendor benefit from the agent narrative, because it sells subscriptions and API usage.

Most people selling you an AI agent course are teaching you how to prompt an LLM in a loop. That’s not an agent — that’s a workflow with extra steps. And there’s nothing wrong with a workflow. The problem is paying agent prices for prompt-library value.

Here’s the filter: if the “agent” requires you to sit there and prompt it through every step, it’s not an agent. It’s you, with a good tool.

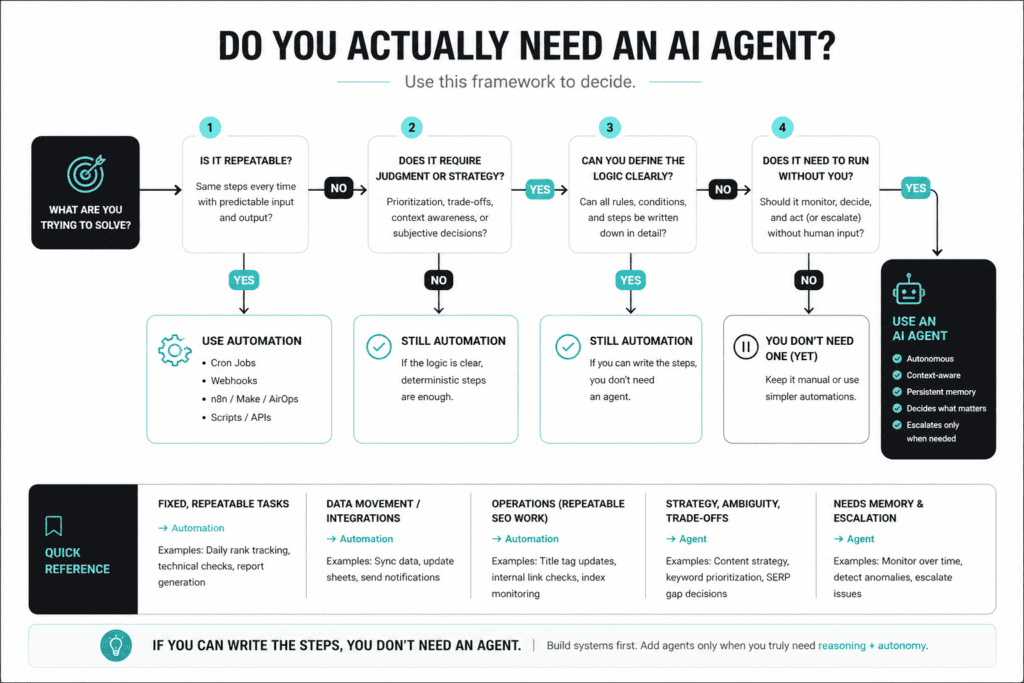

The Decision Framework: Do You Actually Need One?

Instead of telling you what to buy, here’s a framework to figure it out yourself. Be honest with your answers — this only works if you don’t bullshit yourself.

| Criteria | You Need Better Prompting | You Need a Structured LLM Workflow | You Actually Need an Agent |
|---|---|---|---|
| Repetitive task volume | A few tasks per week | Daily multi-step tasks | High-volume, recurring tasks across 10+ sites/clients |
| Technical comfort | Can use ChatGPT/Claude chat | Comfortable with Claude Code, API calls, or n8n | Can write Python, build custom workflows, manage infrastructure |
| Runs without you? | No: you’re always in the loop | Partially: you set it up and review the output | Yes: runs on schedule, decides what matters, escalates exceptions |
| Maintenance tolerance | Zero: plug and play | Low: update prompts/workflows monthly | High: maintain code, debug failures, iterate on logic |
| Budget | $0–$20/mo (subscription tier) | $20–$100/mo (API + tools) | $100+/mo (API, hosting, monitoring, dev time) |
| Honest recommendation | Build a prompt library + saved Claude Projects | Set up a complete SEO automation stack | Build or commission a real agent system |

The honest test: if you can describe your workflow in under five steps and it doesn’t need to run without you — you don’t need an SEO AI agent. You need a better prompt library, maybe a Claude workflow setup, and the discipline to use them consistently.
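If it helps to see the framework as logic rather than a table, here’s one reading of it as a small Python function. The thresholds (10+ sites, five steps, daily runs) come from the table and the honest test above. Two simplifications were made for this sketch: technical comfort and maintenance tolerance are collapsed into a single `can_maintain_code` flag, and budget is left out.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    steps: int                 # steps needed to describe the workflow
    must_run_unattended: bool  # does it have to run without you?
    sites: int                 # sites or clients it covers
    runs_daily: bool           # daily (or more) vs. a few times a week
    can_maintain_code: bool    # can write, debug, and host Python yourself


def recommend(w: Workflow) -> str:
    """One reading of the decision table; thresholds are taken from it."""
    # Agent column: unattended, high volume (10+ sites), and someone to maintain it.
    if w.must_run_unattended and w.sites >= 10 and w.can_maintain_code:
        return "agent"
    # Middle column: daily or multi-step work, or partial automation.
    if w.runs_daily or w.steps >= 5 or w.must_run_unattended:
        return "structured workflow"
    # Everything else: a few short tasks a week with you in the loop.
    return "prompt library"
```

The agent check requires all three conditions, so a short workflow that never runs without you can’t come out as “agent”. That’s the honest test, encoded.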

Most people land in the prompting or workflow column. That’s not a failure; that’s efficiency.

What I’ve Actually Built (And Why)

I’m not writing this from the sidelines. I built a Technical SEO agent using Claude Code that runs recurring audits across client sites — crawl analysis, indexation checks, schema validation, the repetitive stuff that used to eat 3–4 hours of my week.

Let me be honest about it: it took real engineering time. It broke multiple times during development. Maintaining it is a recurring cost — not just in API spend, but in debugging when something upstream changes. This isn’t a weekend project. It’s infrastructure.

The payoff? What used to take hours now runs in minutes without supervision. For my workflow and client volume, the ROI cleared the bar. But for a solo practitioner running SEO for two or three sites? It wouldn’t. And I’d tell them that directly.

Your Two Paths (If You Actually Need One)

If you’ve honestly gone through the framework above and you’re in the right-hand column, here’s how to actually move forward. Two valid paths, neither one wrong.

Path A — The Accessible Route: Claude Code + Managed Platforms

Use Claude Code or the Claude Agent SDK for multi-step execution without building from scratch. Anthropic’s SDK gives you the same tools, agent loop, and context management that power Claude Code — programmable in Python and TypeScript.

Best for: practitioners who want agent-like behavior without maintaining custom infrastructure. You’re trading control for speed-to-deploy.

Path B — Build From Scratch: Python / n8n / Custom Architecture

Build your own agent loops with persistent memory, decision-making, and autonomous execution. Connect your own data sources, define your own logic, own the entire system.

Best for: technically deep practitioners who want to understand what’s under the hood. If you want to actually learn how AI agents for SEO work — not just use them — this is your path.
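At its core, the agent loop in Path B is small: decide on an action, run it, record what happened, and repeat until there’s nothing left to do. Here’s a minimal Python sketch under stated assumptions. The tools are hypothetical stubs with hard-coded results. `toy_decide` is a rule-based stand-in for the LLM call that would normally pick the next action. `memory` is an in-process dict; persisting it between runs (a JSON file, SQLite) is what turns it into the persistent memory this path is about.

```python
from __future__ import annotations

from typing import Callable

# A tool reads/updates shared memory and returns a short observation string.
Tool = Callable[[dict], str]


def run_agent(tools: dict[str, Tool], decide: Callable[[dict], str | None],
              memory: dict, max_steps: int = 10) -> list[str]:
    """Minimal agent loop: decide -> act -> record, until decide returns None."""
    log: list[str] = []
    for _ in range(max_steps):  # hard cap so a bad decision can't loop forever
        action = decide(memory)  # in a real build, this is where the LLM call goes
        if action is None:
            break
        if action not in tools:
            log.append(f"unknown action {action!r}, stopping")
            break
        observation = tools[action](memory)
        memory.setdefault("history", []).append((action, observation))
        log.append(f"{action}: {observation}")
    return log


# --- Hypothetical stub tools with hard-coded results ---
def crawl(memory: dict) -> str:
    memory["pages"] = ["/", "/pricing", "/blog"]
    return f"found {len(memory['pages'])} pages"


def check_schema(memory: dict) -> str:
    memory["schema_errors"] = ["/pricing"]
    return f"schema errors on {len(memory['schema_errors'])} page(s)"


def escalate(memory: dict) -> str:
    return "alerted: " + ", ".join(memory["schema_errors"])


def toy_decide(memory: dict) -> str | None:
    """Rule-based stand-in for the model: picks the next step from prior results."""
    done = [action for action, _ in memory.get("history", [])]
    if "crawl" not in done:
        return "crawl"
    if "check_schema" not in done:
        return "check_schema"
    if memory.get("schema_errors") and "escalate" not in done:
        return "escalate"
    return None  # nothing left worth doing


if __name__ == "__main__":
    tools = {"crawl": crawl, "check_schema": check_schema, "escalate": escalate}
    for line in run_agent(tools, toy_decide, memory={}):
        print(line)
```

The `max_steps` cap and the unknown-action check are there because a real model will occasionally pick something nonsensical. In a from-scratch build, guardrails like these are yours to own.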

The tradeoff between paths isn’t about which is “better.” It’s about whether you want to USE agents or UNDERSTAND them. If your goal is operational efficiency, Path A gets you there faster. If your goal is building a competitive advantage through proprietary automation, Path B is the investment. For a full breakdown of the best SEO automation tools across both paths, I’ve got a separate deep dive.

Frequently Asked Questions

What’s the difference between an AI agent and an AI assistant?

An assistant responds to your prompts one at a time — you drive every step. An agent operates autonomously with persistent memory, its own decision-making, and the ability to run tasks without you being present. Most tools marketed as “agents” are actually sophisticated assistants.

How much does it cost to run an SEO AI agent?

API costs for a moderate-volume agent running daily audits across 5–10 sites typically run $50–$200/month. Add hosting, monitoring, and your time maintaining it, and the real cost is significantly higher than a subscription tool. According to a First Page Sage study, the average time savings with agentic AI is 66.8% — but that only matters if the tasks you’re automating justify the spend.
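Whether the spend is justified comes down to simple arithmetic. Here’s a rough break-even sketch in Python. Every input (hourly rate, hosting, maintenance hours) is a hypothetical placeholder for your own numbers, and the API figures are picked from within the range above. The first example uses the 3–4 hours a week mentioned earlier (3.5 here); the second models a small operation that saves only half an hour a week.

```python
WEEKS_PER_MONTH = 52 / 12


def monthly_net_value(hours_saved_per_week: float, hourly_rate: float,
                      api_cost: float, hosting_cost: float,
                      maintenance_hours_per_month: float) -> float:
    """Value of time saved minus the full running cost, per month."""
    value = hours_saved_per_week * WEEKS_PER_MONTH * hourly_rate
    # Maintenance time is priced at the same hourly rate as the time saved.
    cost = api_cost + hosting_cost + maintenance_hours_per_month * hourly_rate
    return value - cost


if __name__ == "__main__":
    # Hypothetical inputs; replace with your own numbers.
    print(round(monthly_net_value(3.5, 100, 150, 20, 4), 2))  # 946.67
    print(round(monthly_net_value(0.5, 100, 50, 20, 4), 2))   # -253.33
```

Under these made-up inputs, the first setup clears the bar and the second loses money every month, mostly because the maintenance hours don’t shrink with volume.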

Will Google penalize content produced by AI agents?

Not for being AI-produced. Google’s official position is clear: they evaluate content on helpfulness and E-E-A-T signals, not production method. What they penalize is scaled content abuse, meaning low-quality, mass-produced pages designed to manipulate rankings, regardless of whether a human or AI produced them.

Sources & References

- Warmly, “AI Agents Statistics: 2026 Report”

- First Page Sage, “Agentic AI Statistics: 2026 Report”

- Anthropic, “Building Agents with the Claude Agent SDK”

- Anthropic, “Enabling Claude Code to Work More Autonomously”

- Anthropic, Claude Agent SDK Documentation

- Google Search Central, “Google Search’s Guidance About AI-Generated Content”

🤖 Transparency Note: This article was drafted with AI assistance and reviewed, edited, and fact-checked by a human.